Artificial Neural Network tutorial

This article is kindly shared by Jen-Jen Manuel

In this activity, we use the artificial neural network (ANN) toolbox for Scilab to classify objects. A neural network is a computational model of how the neurons in our brain work. In pattern recognition, it is an alternative to linear discriminant analysis (LDA). A neural network learns a pattern through examples. The learning process may take some time, but once a pattern is learned, recognition is expected to be fast.

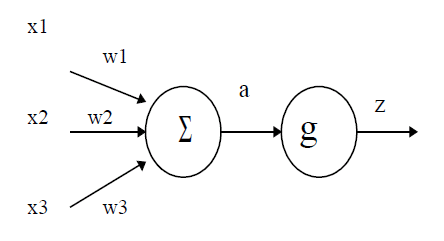

Below is an example of an artificial neuron as modeled by McCulloch and Pitts in 1943. A neuron receives weighted inputs w_i x_i (where i = 1, 2, 3 for the example shown below) from other neurons. These weighted inputs are summed to yield a, which is then passed through an activation function g. The output z = g(a) is then fired to other neurons.

An artificial neuron.

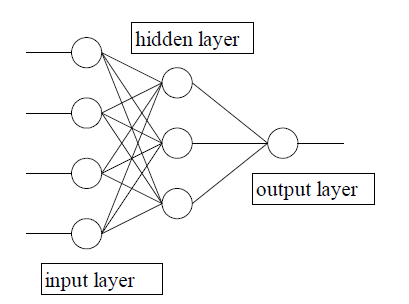
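The neuron described above can be sketched in a few lines of Python. This is only an illustration: the sigmoid is an assumed choice for the activation function g (the article does not say which one is used), and the input and weight values are made up.

```python
import math

def sigmoid(a):
    """Assumed activation function g; the article does not name one."""
    return 1.0 / (1.0 + math.exp(-a))

def neuron_output(inputs, weights, activation=sigmoid):
    """Sum the weighted inputs w_i * x_i to get a, then return z = g(a)."""
    a = sum(w * x for w, x in zip(weights, inputs))
    return activation(a)

# Made-up example with three inputs, as in the figure.
x = [0.5, 0.2, 0.9]
w = [0.4, -0.6, 0.3]
z = neuron_output(x, w)
print(z)
```

Any other activation function can be swapped in through the `activation` argument without changing the summation step.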

A neural network is formed by connecting many neurons. An example of this is shown below. A typical neural network consists of three layers: an input layer, a hidden layer and an output layer. The input layer can be a set of features extracted from the objects to be classified. The hidden layer acts on this set and then passes the result to the output layer.

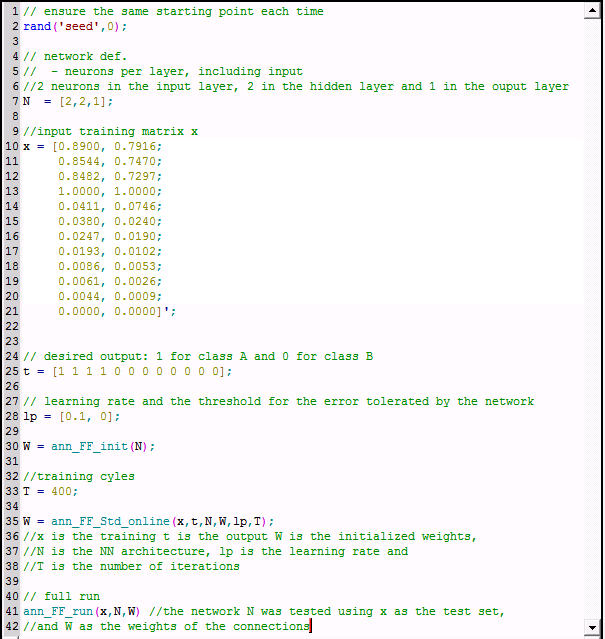
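To make the flow through the three layers concrete, here is a minimal Python sketch of a forward pass. The layer sizes (2 inputs, 2 hidden neurons, 1 output), the sigmoid activation, the bias terms and all weight values are assumptions chosen for illustration, not values taken from the activity.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def layer_forward(inputs, weights, biases):
    """Compute one layer's outputs; each row of `weights` belongs to one neuron."""
    return [sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

def network_forward(features, hidden_w, hidden_b, output_w, output_b):
    """Input layer (the features) -> hidden layer -> output layer."""
    hidden = layer_forward(features, hidden_w, hidden_b)
    return layer_forward(hidden, output_w, output_b)

# Hypothetical weights for a 2-2-1 network.
hidden_w = [[0.5, -0.4], [0.3, 0.8]]
hidden_b = [0.0, 0.0]
output_w = [[1.2, -0.7]]
output_b = [0.0]

output = network_forward([0.6, 0.1], hidden_w, hidden_b, output_w, output_b)
print(output)
```

The input layer does no computation here; it only holds the feature values that are handed to the hidden layer.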

We now use the ANN toolbox for object classification. There are two classes of objects, A and B, to be classified based on two extracted features. The code used is shown below. Note that the input training matrix x consists of 12 rows and 2 columns, since there are 12 objects to be classified based on 2 features. The desired output t represents the class of each object: a value of 1 means class A and a value of 0 means class B. The values of both features are normalized.

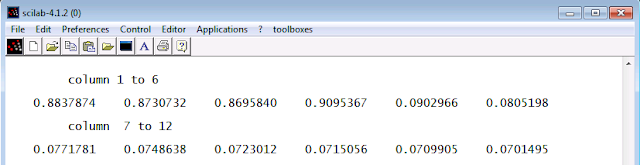
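For readers without Scilab, the same kind of training can be sketched in plain Python. This is not the toolbox code shown in the image. It is a hand-written online backpropagation loop under several assumptions: a 2-2-1 network, sigmoid activations, a single scalar learning rate (taken here to correspond to the first entry of the learning-rate pairs listed with the results), and a made-up set of 12 normalized feature pairs that stand in for the real data.

```python
import math
import random

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def forward(x, w_hidden, w_out):
    """Return (hidden activations, output). The last weight in each list is a bias."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + row[-1])
              for row in w_hidden]
    z = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + w_out[-1])
    return hidden, z

def train(samples, targets, learning_rate, cycles, n_hidden=2, seed=0):
    """Online backpropagation: weights are updated after every sample."""
    rng = random.Random(seed)
    n_in = len(samples[0])
    w_hidden = [[rng.uniform(-1, 1) for _ in range(n_in + 1)]
                for _ in range(n_hidden)]
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]
    for _ in range(cycles):
        for x, t in zip(samples, targets):
            hidden, z = forward(x, w_hidden, w_out)
            delta_out = (z - t) * z * (1.0 - z)
            # Hidden deltas use the output weights before they are updated.
            delta_hidden = [delta_out * w_out[j] * h * (1.0 - h)
                            for j, h in enumerate(hidden)]
            for j, h in enumerate(hidden):
                w_out[j] -= learning_rate * delta_out * h
            w_out[-1] -= learning_rate * delta_out
            for j, row in enumerate(w_hidden):
                for i, xi in enumerate(x):
                    row[i] -= learning_rate * delta_hidden[j] * xi
                row[-1] -= learning_rate * delta_hidden[j]
    return w_hidden, w_out

# Made-up, already normalized features: NOT the data from the original activity.
x = [[0.90, 0.80], [0.85, 0.95], [1.00, 0.75], [0.80, 0.90], [0.95, 1.00], [0.75, 0.85],
     [0.10, 0.20], [0.20, 0.10], [0.15, 0.30], [0.05, 0.25], [0.30, 0.15], [0.25, 0.05]]
t = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0]  # 1 = class A, 0 = class B

# Try the same learning rate / training cycle combinations as the article.
for learning_rate, cycles in [(0.1, 400), (2.5, 400), (0.1, 1000), (2.5, 1000)]:
    w_hidden, w_out = train(x, t, learning_rate, cycles)
    outputs = [forward(xi, w_hidden, w_out)[1] for xi in x]
    print(learning_rate, cycles, [round(z, 3) for z in outputs])
```

Because the data and the initial weights differ from the original activity, the printed numbers will not match the article's figures; the sketch only shows how the learning rate and the number of training cycles enter the training loop.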

The learning rate and the number of training cycles T can be tweaked. The results for different combinations of these two parameters are shown below.

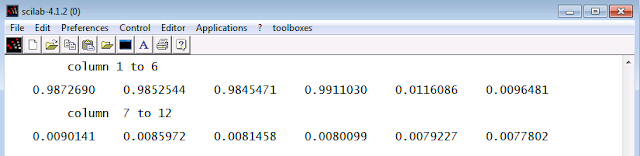

learning rate = [0.1, 0], T = 400

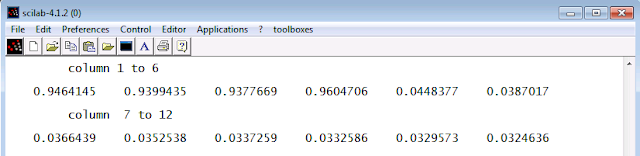

learning rate = [2.5, 0], T = 400

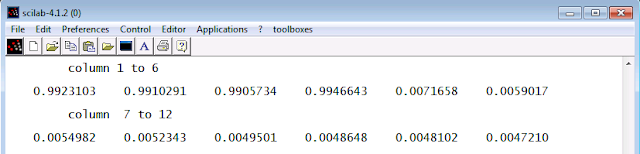

learning rate = [0.1, 0], T = 1000

learning rate = [2.5, 0], T = 1000

The results show that as the learning rate is increased, the obtained output values get closer to the desired ones. Increasing the number of training cycles also brings the output values closer to the desired ones. The predictions made by the neural network turn out to be 100% accurate. Using the ANN toolbox for classification is also easier than using LDA.
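One way to turn the network's continuous outputs into class labels and an accuracy figure is sketched below. The 0.5 threshold is an assumption, since the article does not state how outputs are mapped to classes, and the output values are hypothetical placeholders rather than the article's results.

```python
def classify(outputs, threshold=0.5):
    """Map each output to class A (near 1) or class B (near 0)."""
    return ["A" if z >= threshold else "B" for z in outputs]

def accuracy(predicted, expected):
    """Fraction of objects whose predicted class matches the expected one."""
    correct = sum(p == e for p, e in zip(predicted, expected))
    return correct / len(expected)

# Hypothetical network outputs and their desired classes.
outputs = [0.97, 0.91, 0.88, 0.12, 0.05, 0.20]
expected = ["A", "A", "A", "B", "B", "B"]

predicted = classify(outputs)
print(predicted, accuracy(predicted, expected))
```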